The New Power Triangle Shaping AI Compute | TWS #057

SpaceX, Cursor, and Anthropic walk into a bar...


It’s funny how the tech world works.

I get this feeling there’s been a subtle but massive structural shift happening lately. Everyone is hyper-focused on which frontier model is winning the benchmark game, but there’s also another game being played…

There’s a pattern emerging across the hyperscalers.

To understand this pattern, the best case study is to observe the moves SpaceX has taken over the past couple of months.

What Happened?

Three deals dropped in rapid succession that, on the surface, look like separate news items, but they’re actually not.

Let’s go through them:

Deal 1: Musk announced that xAI will be “dissolved as a separate company” and folded into SpaceX under a new name: SpaceXAI. All of xAI’s people, tech, and products move under the SpaceX corporate umbrella, and SpaceXAI becomes the brand for all of SpaceX’s AI efforts, including the Grok model and chatbot (we’ll touch on this later), Grokipedia, and Grok Imagine.

Deal 2: SpaceX then struck a partnership with Cursor, the AI-native code editor that every developer and their dog is now using. This was structured as a call option: SpaceX can acquire Cursor outright for $60 billion later this year, or pay a $10 billion breakup fee to keep the partnership alive without the acquisition. Either way, Cursor gets access to the Colossus supercomputer cluster for model training and possibly helps with Grok’s coding abilities.

Deal 3: Anthropic signed an agreement to take over all of the compute capacity at SpaceX’s Colossus 1 data center in Memphis, Tennessee. This would be over 300 megawatts of power and more than 220,000 NVIDIA GPUs coming online within the month. This means that all of you who use Claude Pro and Max should see immediate capacity improvements under these plans.
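For a rough sense of scale, here is a quick back-of-envelope sketch of what those two numbers imply per GPU. It assumes the quoted figures are floors ("over" 300 MW, "more than" 220,000 GPUs) and simply divides one by the other. The function name is made up for illustration, and the result is facility power per GPU (presumably covering cooling, networking, and host machines too), not the draw of the chip itself.

```python
# Back-of-envelope only: both inputs are the floor figures quoted above
# ("over 300 MW", "more than 220,000 GPUs"), not measured values.
FACILITY_POWER_MW = 300   # stated facility power, in megawatts
GPU_COUNT = 220_000       # stated NVIDIA GPU count


def facility_kw_per_gpu(power_mw: float, gpu_count: int) -> float:
    """Facility power divided evenly across GPUs, in kilowatts (hypothetical helper)."""
    return power_mw * 1_000 / gpu_count


kw_per_gpu = facility_kw_per_gpu(FACILITY_POWER_MW, GPU_COUNT)
print(f"~{kw_per_gpu:.2f} kW of facility power per GPU")
```

That works out to a bit under 1.4 kW of facility power for every GPU on the floor, which gives a feel for why deals like this are quoted in megawatts rather than chip counts.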

If you read these together, the picture becomes pretty clear.

SpaceXAI wants to position itself as this sort of compute landlord of the AI industry, and this would certainly be an interesting pivot.

Behind the Scenes

For SpaceX, the OG plan was to merge xAI into SpaceX with the goal of building a frontier AI company to compete with OpenAI’s GPT and Anthropic’s Claude models. For them, Grok was going to be the model.

But fast forward three months, and two senior product engineering leads from Cursor have already joined SpaceXAI to enhance Grok’s coding capabilities from the ground up, alongside other pursuits around their orbital data centers. Meanwhile, it’s fair to say that Grok still isn’t competitive with Claude or GPT on most coding benchmarks. And now SpaceXAI is renting out the data centers that were supposed to power Grok.

There’s also the interesting dynamic (and tension) between Musk and OpenAI.

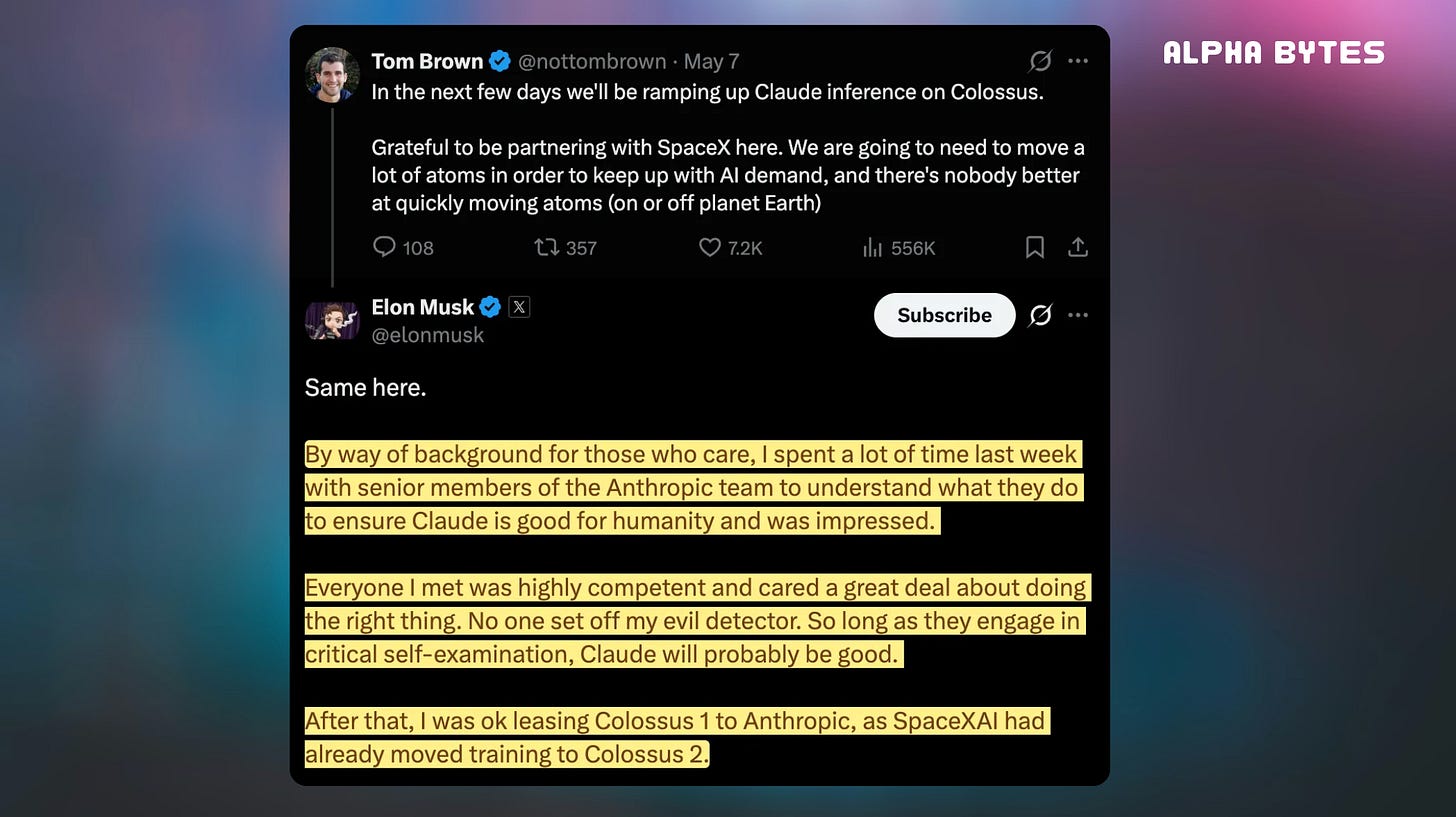

After spending a week in court testifying against Sam Altman, Musk posted that he’d also spent time with senior Anthropic team members and was “impressed.” He said, “Everyone I met was highly competent and cared a great deal about doing the right thing. No one set off my evil detector.”

I reckon that’s the most pragmatic thing Musk has said about AI all year. In a way, it sounds like he has a soft spot for Anthropic now, realizing that it’s the company he wanted OpenAI to be right from the beginning.

The Benchmark Reality Check

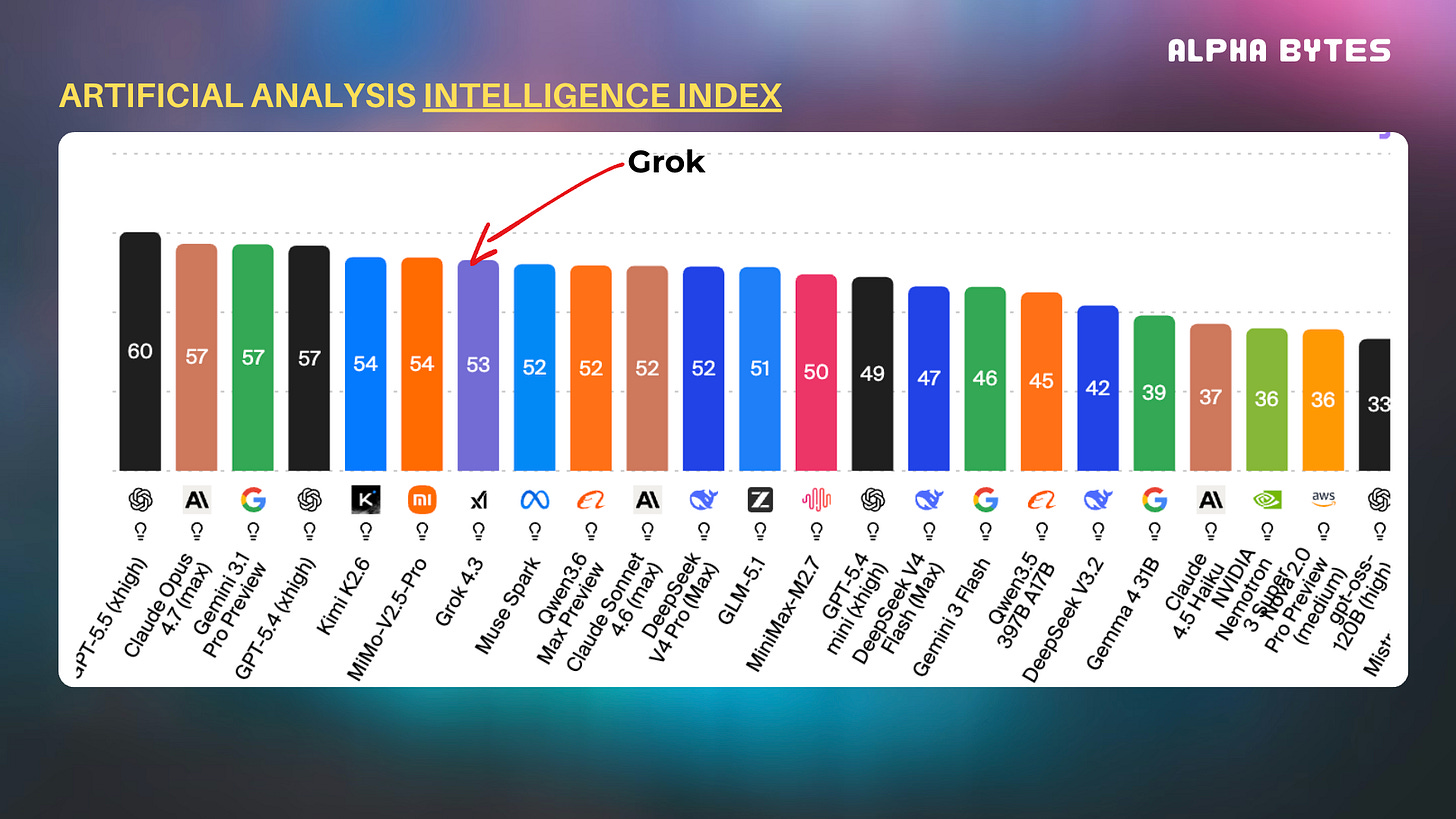

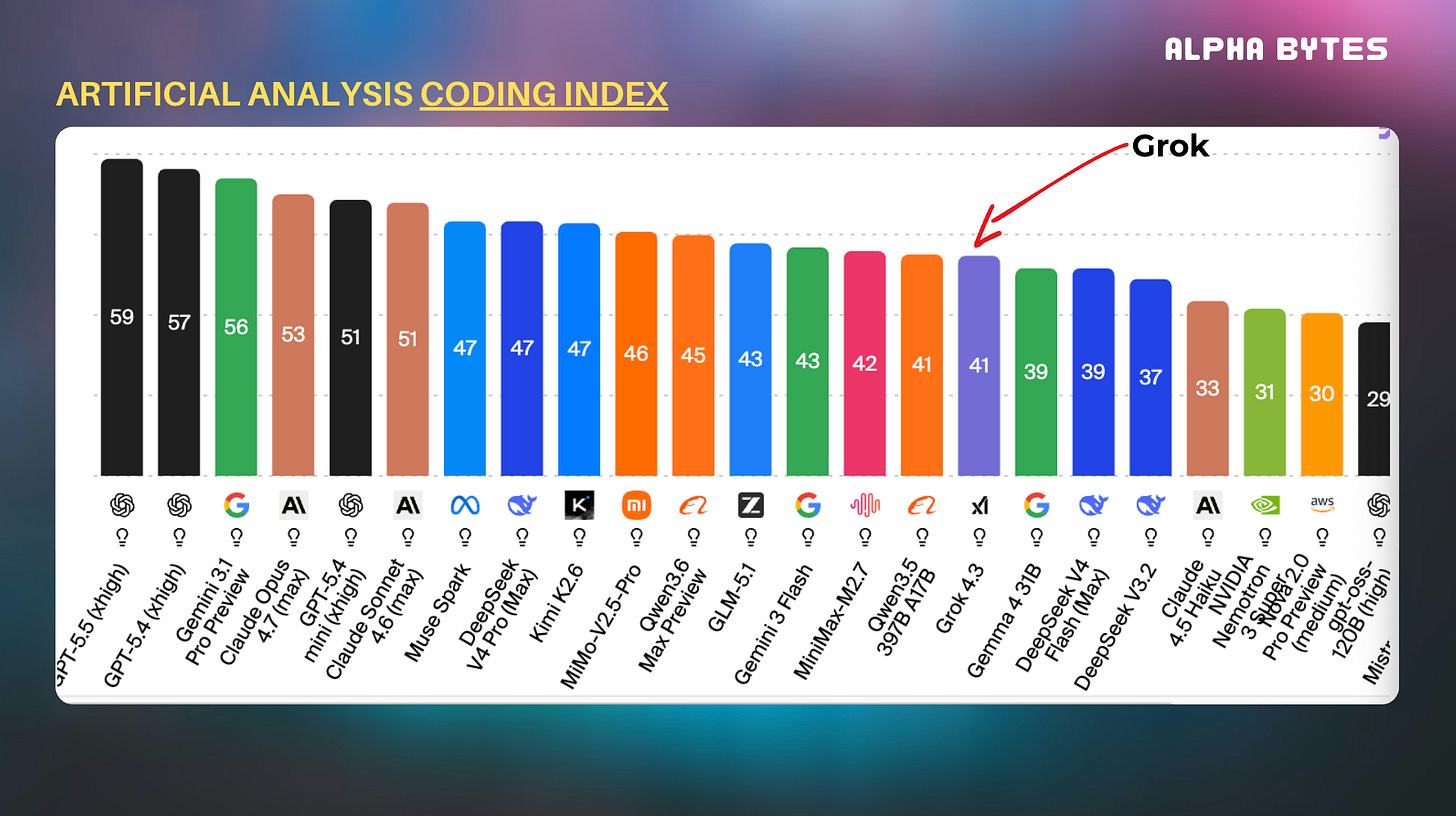

On a more technical note, if you look at the recent data and analysis of Grok, it’s quite sobering to say the least.

On the Intelligence Index, the latest Grok 4.3 sits at a score of 53. That doesn’t sound bad until you realize that the frontier models from Anthropic (Opus 4.7) and OpenAI (GPT-5.5) are slowly pulling away.

But the Coding Index is where the gap really hurts, especially for a company trying to partner with Cursor. On software engineering benchmarks like SWE-bench, Claude’s latest models are consistently scoring around 87-93%. Grok 4, by comparison, has effectively fallen into the mid-tier pack. It’s even been beaten by Meta’s latest model, Muse Spark. Ouch.

At the end of the day, when you have a flagship model that’s trailing by a big margin in coding capabilities, the whole story around building towards AGI doesn’t really make sense anymore. That gap probably explains why SpaceXAI had to poach Cursor’s engineering leads, and it could also be why the company is now moving to infrastructure rather than purely relying on Grok to win the model war.

Why this matters

You should read this pretty good analysis of the SpaceX + Cursor partnership over at Kwokchain, where Kevin Kwok frames the SpaceX-Cursor deal as “the search for a complete loop.” The idea is that to win in AI right now, you need three things: compute, models, and product.

No single company has all three locked down.

Cursor has arguably the best product in AI-assisted coding — deep workflow integration, agentic capabilities, and a developer community that borders on religious. But they’ve been compute-constrained. They couldn’t train frontier-scale models on their own infrastructure. On the other side, SpaceXAI actually has the compute (via Colossus 1) but lacks a product with enough user adoption to create that self-reinforcing data flywheel.

Remember the call option structure I mentioned above?

Well, SpaceX is about to IPO, so doing a $60 billion acquisition can get tricky in the lead-up to such a massive event. The option structure lets both sides keep building independently while locking in optionality for later.

But the Anthropic deal is the emerging signal here, because Anthropic didn’t just buy some capacity at Colossus 1. They took pretty much all of it. And as part of the agreement, both companies showed interest in developing their model across their Orbital AI data centers when they’re ready.

What’s driving this

We’re starting to see the economics of the AI infrastructure layer become really clear. You see, Anthropic’s demand for Claude has grown so fast that they’ve been candid about the whole “inevitable strain” issue on their infra. Last month, users, especially Claude Code power users running long coding sessions, were getting hammered by reliability issues during peak hours.

So now Anthropic is accelerating its scaling via the SpaceX deal, which sits alongside an absolute war chest of compute partnerships it has been stacking up: a 5 GW agreement with Amazon, a 5 GW deal with Google and Broadcom (coming online 2027), and a $30 billion Azure commitment with Microsoft and NVIDIA. The total compute pipeline Anthropic is assembling is frankly absurd. But it has to be.

And there’s also another question that underpins all of this: what does SpaceX actually want to be?

The whole SpaceXAI rebrand suggests they probably haven’t abandoned the frontier model ambition entirely. But their actions might tell a different story. When you rent out your own data center to a competitor and structure a partnership with Cursor — who, by the way, still primarily uses Claude and other non-Grok models — you’re definitely signaling something…

This is where we might start to see SpaceX evolve into a neocloud: a vertically integrated infrastructure provider that sells compute to AI labs rather than competing with them on the model layer.

It’s definitely not a bad strategy. The neocloud market is growing really fast.

—

The irony is palpable.

Anthropic was founded by executives who left OpenAI because they were worried about safety.

Musk sued OpenAI because he felt they’d abandoned their original mission.

Now, Anthropic is running Claude on the infrastructure that Musk built to compete against them, while Musk testifies against Sam Altman in court. LOL.

The AI industry’s relationship map looks less like a corporate org chart and more like a messy group chat. Competitors are becoming customers. Rivals are now landlords, and so on and so on…

But if we strip away all this drama, compute is now the critical bottleneck, and whoever controls the infrastructure controls the speed of the race.

SpaceX just figured out it’s more valuable, and probably more profitable, to be the landlord than to be yet another tenant trying to build a frontier model.

ICYMI:


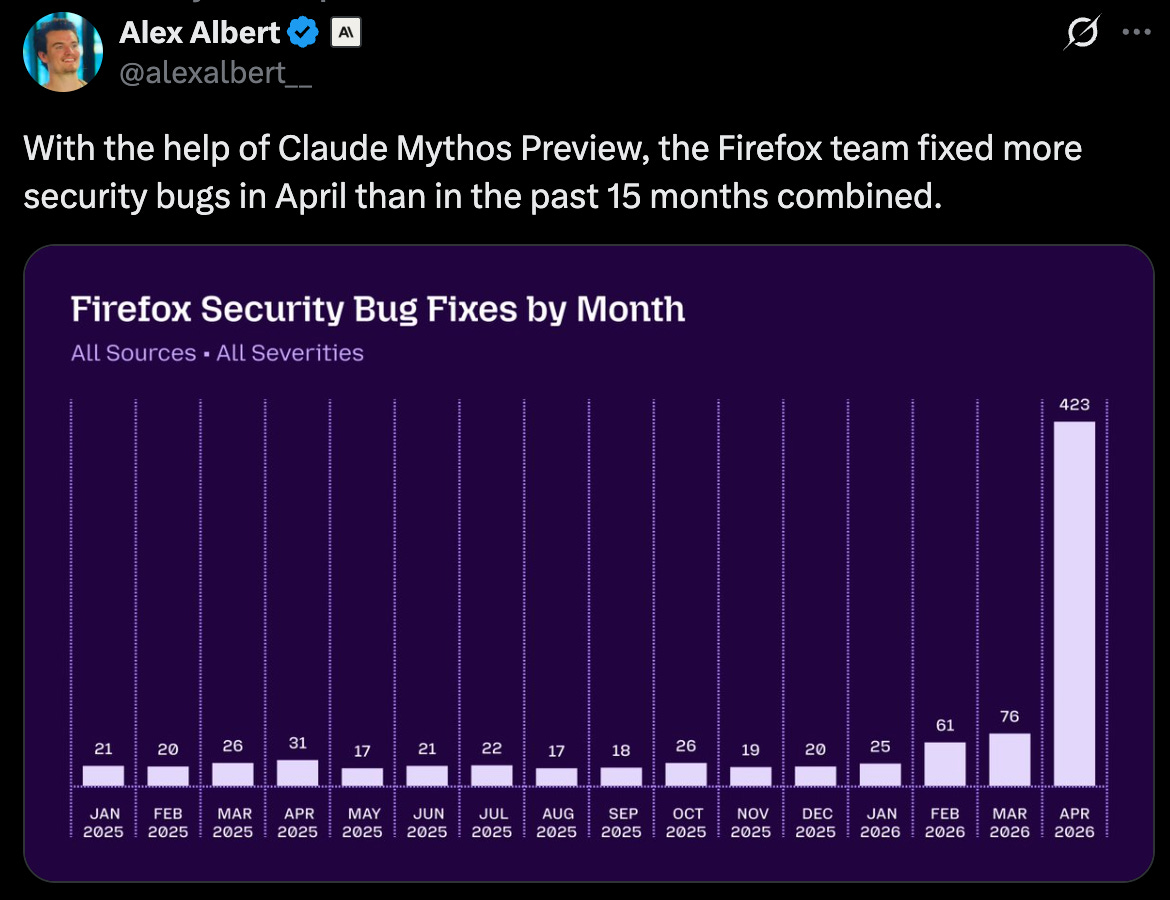

This is quite impressive. Cybersecurity is becoming the new frontier where AI is showing immense capability & value. You can check out our previous piece on Mythos to learn more about it.

Wow. Narrative violation. Something to keep an eye on. Everyone has been saying that SaaS is dead. But is it really? Don’t think this bounce-back will happen to every listed SaaS company, but those who play in that nice AI-native data infrastructure layer could see more gains than losses.

While we’re seeing America rebuild itself, Australia is going through a very similar transition. Australia’s circumstances are bittersweet: the country is blessed with beautiful beaches and abundant natural resources, but over the past several decades it has rested on its laurels and fallen behind. Now things are changing, and we’ve finally come to the realization that innovation, science, technology, and even entrepreneurship will be our saving grace.

You should check out (and even join) the initiative here.
